AMTSO Testing Standards: Why You Should Demand Them

When it comes to security product testing, a good test in one context can turn out to be meaningless in another.

Have you ever done an internet search for “the best x product”? These searches always produce results, and sometimes you may even follow the advice listed. However, did you ever stop to consider the source and how they made their determination?

Many of those list rankings are based on money paid by vendors. Would knowing that influence your impression of that list? That’s why accessing the information that went into compiling a list is just as important as the list itself.

Security product testing has been around for as long as there have been security products. On the surface, the process for testing security products would appear to be pretty straightforward – feed in a large collection of clean and malicious files and see which are detected and which are not. However, as H.L. Mencken stated, “For every complex problem there is an answer that is clear, simple, and wrong.”

Testing security software badly is simple. Testing security software well is hard. Today’s threats are extremely complex, and as a result, the products that protect against them must be equally complex. How well a test handles these complexities should dictate how much weight one gives its results.

How then does one determine whether a test was done well or badly? The answer is information. The consumer of a test result must be armed with sufficient information to draw the proper conclusions, about both the quality of the testing and the meaningfulness of the results. A good test in one context can be meaningless in another. It’s important to look at the test holistically.

What Standards Do and Don’t Do

The Anti-Malware Testing Standards Organization (AMTSO) is a non-profit organization founded in 2008 to improve the business conditions related to the development, use, testing and rating of anti-malware products and solutions. Its testing Standards ensure that relevant information about the test and the participants is disclosed. This includes:

- What did the vendors have to say about the methodology?

- Which vendors (if any) had a chance to configure their product?

- Which vendors (if any) had the opportunity to dispute their results?

AMTSO manages the process and determines whether a test is compliant with the Standards, which are published on the AMTSO website.

It’s important to keep in mind that a test’s compliance with the Standards doesn’t speak to the quality of the testing or the meaningfulness of the results. The Standards are important because they provide the data necessary to judge the quality or relevance of the test, including how the participants were treated: who had additional access, who got to configure their product, who got to dispute their results, and so on.

The Standards require that these facts be presented. Without them, you are relying on the judgement of others, and the conclusions they reach for you.

Who Do You Trust?

In my experience, most people trust the testers over the vendors. This seems reasonable, as the vendors are not disinterested parties. But the Standards enable you to trust yourself. This is because in a Standards-compliant test you will see the underlying facts, including the arguments of the vendor and the tester in the event of a dispute. You can weigh those arguments and reach your own conclusion.

The AMTSO Standards lift the lid and allow the consumer to examine the inner workings and make their own judgements.

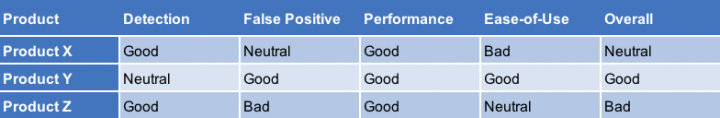

For example, let’s say a test has several categories – good, bad and neutral. They assess multiple products and categories as such:

Is this a good test? Is it useful? Which product should you buy? What defines good, bad and neutral? Is it the same for all categories? Without more information, it’s impossible to tell. This is where the AMTSO Standards can help. They ensure that the details of the testing and the scoring are available to the vendors and the consumers of the test, and that reasoned commentary regarding relevant issues is included and can be evaluated by consumers. Of course, not all consumers will agree – but each one can make their own assessment from the same data.

Know the Facts

When a consumer reads a test report there are a number of important questions they should ask, including:

- Methodology:

    - What is the purpose of the test? What does it intend to show?

    - Does the methodology support this?

    - Are the chosen products correct given the scope of the test?

    - How were those products configured?

    - What is the environment of the test?

        - Is there internet connectivity?

        - Are the clients managed/unmanaged?

- Test cases:

    - What test cases were used?

    - How were they obtained?

    - How was their maliciousness/functionality verified?

    - How was detection determined/verified?

    - What clean test cases were used, and how were they obtained?

    - How were the samples – both clean and malicious – introduced to the products?

- Results:

    - How were the results monitored and verified?

    - Was there a sample dispute process?

        - Who was allowed to participate?

        - Who participated?

    - How were the final scores calculated?

If the answers to these questions are not available in the test report, that should be cause for concern.
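As a rough illustration, the checklist above can be treated as a set of required disclosures and a report checked against it. This is a minimal sketch only: the section and item names are hypothetical shorthand for the questions listed, not an AMTSO-defined schema, and the example report is invented for illustration.

```python
# Hypothetical shorthand for the questions above; not an AMTSO-defined schema.
REQUIRED_DISCLOSURES = {
    "methodology": ["purpose", "product_selection", "configuration", "environment"],
    "test_cases": ["sources", "verification", "clean_samples", "introduction_method"],
    "results": ["monitoring", "dispute_process", "score_calculation"],
}


def missing_disclosures(report):
    """Return 'section.item' labels for questions the report leaves unanswered."""
    missing = []
    for section, items in REQUIRED_DISCLOSURES.items():
        answers = report.get(section, {})
        for item in items:
            answer = answers.get(item)
            # Treat absent keys and empty or whitespace-only answers as unanswered.
            if answer is None or not str(answer).strip():
                missing.append(f"{section}.{item}")
    return missing


# Invented example: a report that answers only three of the questions.
example_report = {
    "methodology": {
        "purpose": "Measure protection against downloaders",
        "configuration": "Vendor defaults",
    },
    "results": {"score_calculation": "Blocked samples / total samples"},
}

gaps = missing_disclosures(example_report)
print(f"{len(gaps)} unanswered questions:", gaps)
```

The more entries that come back in `gaps`, the less a reader has to go on when judging the test for themselves.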

Claims and Counter Claims

Here is a simple scenario:

- The tester obtains a sample of a malicious downloader (a piece of malware that downloads additional malicious content from the internet)

- The tester introduces this sample into the testing environment

- The product installed in that environment fails to generate an alert and fails to remove the sample

- The tester claims the product failed to protect the machine

Is that claim correct? We’ll need some additional data to know for sure. For example, if the internet location from which the sample downloads its content was no longer operational, that would change our response.

In this case, it is true that the product failed to detect the sample statically, but that is not what was claimed. The behavior that the security product saw was an application that launched, attempted to access the internet, and exited. Did anything malicious happen here? If a clean sample took the same actions, should it be detected? Was the machine compromised? If the download had succeeded, would the product have detected the sample? Without more information, we don’t know the answers, but that doesn’t mean that the product failed to protect. If a bank robber walks into a bank, then walks out, was the bank robbed? It is more complicated than simply detecting a file or not.
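The reasoning in this scenario can be sketched as a small decision function. It is an illustrative sketch only: the field names and verdict labels are assumptions made for this example, not part of any testing standard or product, and a real assessment would need far more evidence than three flags.

```python
from dataclasses import dataclass


@dataclass
class DownloaderRun:
    """Observations from one run of the downloader scenario (hypothetical fields)."""
    sample_blocked: bool      # the product alerted on or removed the sample
    payload_host_live: bool   # the download location was still operational
    payload_executed: bool    # the downloaded content actually ran


def assess(run):
    """Return (verdict, reason) describing what the evidence supports."""
    if run.sample_blocked:
        return "protected", "the product stopped the downloader itself"
    if run.payload_executed:
        return "failed to protect", "malicious content ran on the machine"
    if not run.payload_host_live:
        return "inconclusive", "the download location was down, so nothing malicious happened"
    return "inconclusive", "no payload ran, but the evidence does not say why"


# The scenario described above: no alert, dead download location, no payload.
scenario = DownloaderRun(sample_blocked=False, payload_host_live=False, payload_executed=False)
verdict, reason = assess(scenario)
print(verdict, "-", reason)
```

Only the second branch supports the tester's claim that the product failed to protect the machine; a missing alert on its own does not.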

Why You Should Demand Standards-Compliant Tests

Let’s take a look at another testing scenario:

- The installation media for several brands of security software are each taped to standard office chairs

- The chairs are tossed from the top of a 10-story building

- The product attached to the chair suffering the least damage is deemed the best security software

It may sound ridiculous, but this test can be completed in an AMTSO-compliant way. The Standards require the tester to describe the methodology in detail and seek commentary from the vendors of the products involved. In this scenario, the vendors would likely respond that this was a meaningless test – and the tester would need to include that commentary in the published report.

The reader is tasked with drawing a conclusion based on the methodology and the comments provided by the vendors. However, if I had only told you that we had tested the security products “in a stressful scenario and used advanced analytics to assess each product’s performance,” you would not have known to dispute that claim.

AMTSO Standards are not only useful but necessary. They make the security software space more transparent and honest and also expose poor methodologies and dubious claims made by both testers and vendors. Overly broad claims get challenged and consumers have the information necessary to make informed choices. That is a key component in achieving better, more meaningful tests.
